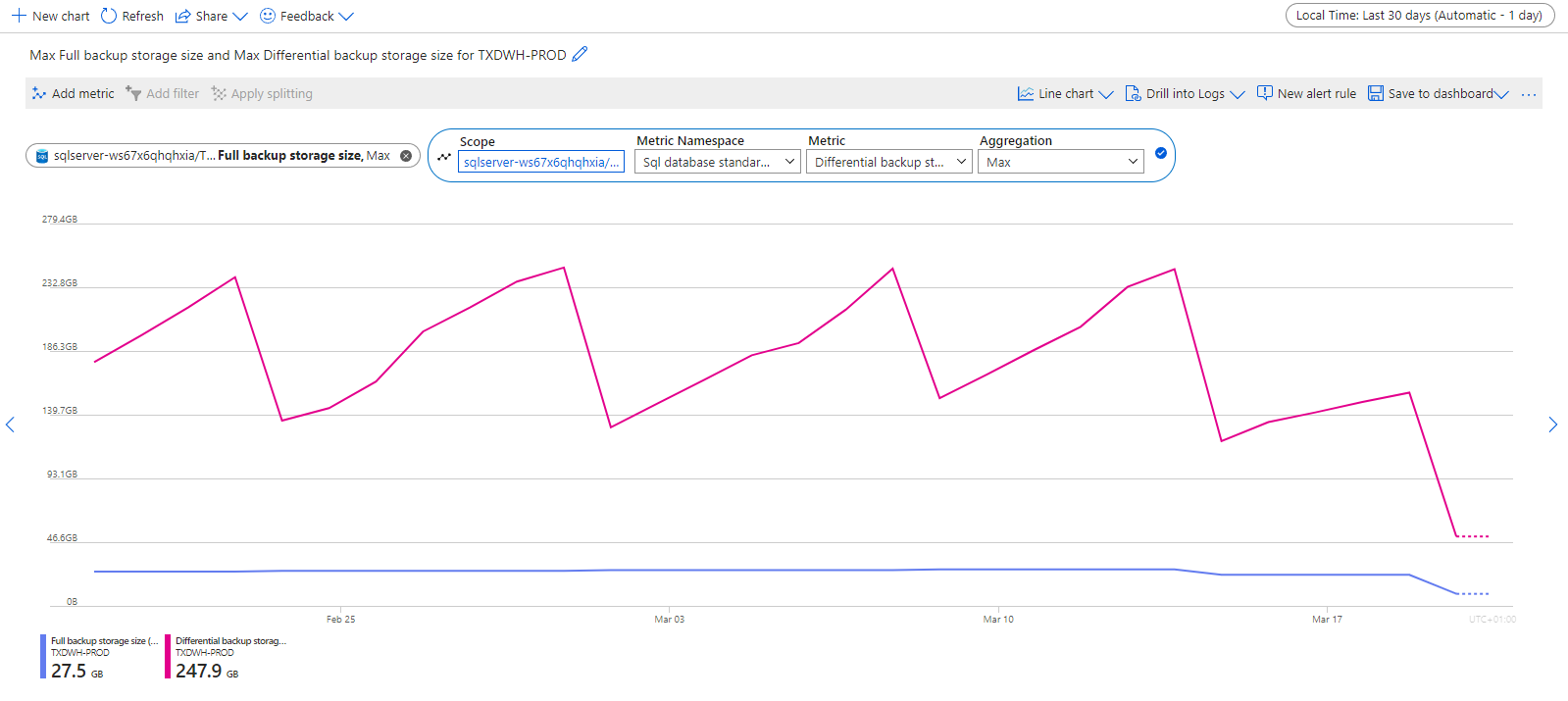

Recently we noticed that the Azure SQL Database backup costs for our TimeXtender environment are very high, almost higher than the regular storage costs themselves. We are now trying to minimize these backup costs. We have already reduced the backup retention policies.

Now, when analysing the backup data in the Azure Portal, we found that the log backup storage size is growing into multiple terabytes, even though our PROD database is only around 28 GB.

Does anybody know how we can further analyse and reduce these backup costs and the data behind them? I found the following about the transaction log, but I can't seem to drill down into it, for example to see the log size per table or per execution.

--------------------------------------------------------------------------------------------------------------------

In Azure SQL Database, transaction logs record changes made to the database to ensure data durability and provide for features like point-in-time restore and disaster recovery. Various operations within Azure SQL Database can trigger changes that are logged in the transaction log. Here are some common operations that trigger transaction log entries:

- Data Modifications: Any INSERT, UPDATE, DELETE, or MERGE operation on tables or indexed views triggers changes that are logged in the transaction log.

- Schema Modifications: Changes to the database schema, such as creating or dropping tables, altering table structures, adding or removing columns, or modifying constraints, are logged in the transaction log.

- Index Operations: Creating, dropping, or rebuilding indexes (offline or online) also triggers changes that are logged in the transaction log.

- Transaction Control Statements: BEGIN TRANSACTION, COMMIT TRANSACTION, ROLLBACK TRANSACTION, SAVE TRANSACTION, and other transaction control statements are logged in the transaction log to maintain transactional consistency.

- Data Definition Language (DDL) Statements: DDL statements such as CREATE, ALTER, and DROP for database objects (tables, views, procedures, functions, etc.) are logged in the transaction log.

- Data Manipulation Language (DML) Statements: Statements such as SELECT INTO also generate transaction log entries, as does TRUNCATE TABLE (which Microsoft's documentation classifies as DDL rather than DML).

- System Operations: Various system operations and administrative tasks, such as database backups, log backups, database restores, and database copies, trigger changes that are logged in the transaction log.
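To get from this general list to "log size per execution", one possible starting point is Query Store, which records an `avg_log_bytes_used` value per plan and interval in `sys.query_store_runtime_stats`. The sketch below is only an illustration under assumptions: Query Store must be enabled on the database, the numbers only cover the Query Store retention window, and the helper names (`top_log_writers`, `format_report`, `fetch_rows`) as well as the use of `pyodbc` are hypothetical choices of mine, not something TimeXtender or Azure prescribes. It ranks statements by the approximate total log bytes they generated.

```python
from typing import Iterable, List, NamedTuple, Tuple

# T-SQL to run in the user database (not master). Assumes Query Store is on.
# avg_log_bytes_used is an average per execution, so multiplying by
# count_executions gives an approximate total per interval.
LOG_BYTES_PER_QUERY_SQL = """
SELECT
    q.query_id,
    SUM(rs.count_executions)                         AS executions,
    SUM(rs.avg_log_bytes_used * rs.count_executions) AS total_log_bytes,
    qt.query_sql_text
FROM sys.query_store_runtime_stats AS rs
JOIN sys.query_store_plan       AS p  ON p.plan_id        = rs.plan_id
JOIN sys.query_store_query      AS q  ON q.query_id       = p.query_id
JOIN sys.query_store_query_text AS qt ON qt.query_text_id = q.query_text_id
GROUP BY q.query_id, qt.query_sql_text
ORDER BY total_log_bytes DESC;
"""


class QueryLogUsage(NamedTuple):
    query_id: int
    executions: int
    total_log_bytes: float
    query_sql_text: str


def top_log_writers(rows: Iterable[Tuple], limit: int = 10) -> List[QueryLogUsage]:
    """Rank result rows (same column order as the query) by total log bytes."""
    usage = [QueryLogUsage(*row) for row in rows]
    usage.sort(key=lambda u: u.total_log_bytes, reverse=True)
    return usage[:limit]


def format_report(usage: Iterable[QueryLogUsage]) -> List[str]:
    """Render one tab-separated line per statement, with log volume in MB."""
    lines = []
    for u in usage:
        megabytes = u.total_log_bytes / (1024 * 1024)
        text = u.query_sql_text[:80]  # keep the report readable
        lines.append(f"{u.query_id}\t{u.executions}\t{megabytes:.1f} MB\t{text}")
    return lines


def fetch_rows(connection_string: str) -> List[Tuple]:
    """Hypothetical helper: run the query via pyodbc (third-party package)."""
    import pyodbc  # imported lazily so the ranking helpers work without it

    with pyodbc.connect(connection_string) as conn:
        cursor = conn.cursor().execute(LOG_BYTES_PER_QUERY_SQL)
        return [tuple(row) for row in cursor.fetchall()]
```

With a valid connection string, `format_report(top_log_writers(fetch_rows(conn_str)))` would list the heaviest log writers first. The query text can also be run on its own in SSMS or the Azure Portal query editor. In an ETL-style environment, full reloads (truncate and re-insert) and index rebuilds are typical candidates to show up near the top, but that is an assumption to verify against the actual output.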