Hi,

We are currently trying out the new generation of TimeXtender, and I’m finding the job setup a bit lacking. Perhaps I’ve just missed something, but here are a few things that bother me.

As far as I understand, the way to schedule in the DW is to set up your execution packages in the Execution tab and then set up a job that schedules those execution packages.

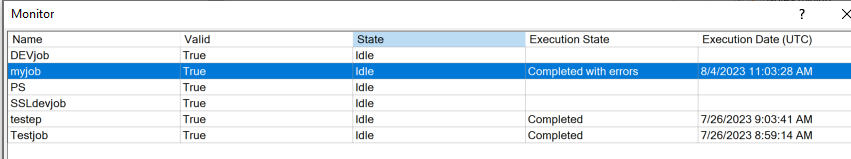

In my trials I intentionally set up a package to fail. First of all, the Jobs monitoring view does not show any information about the error:

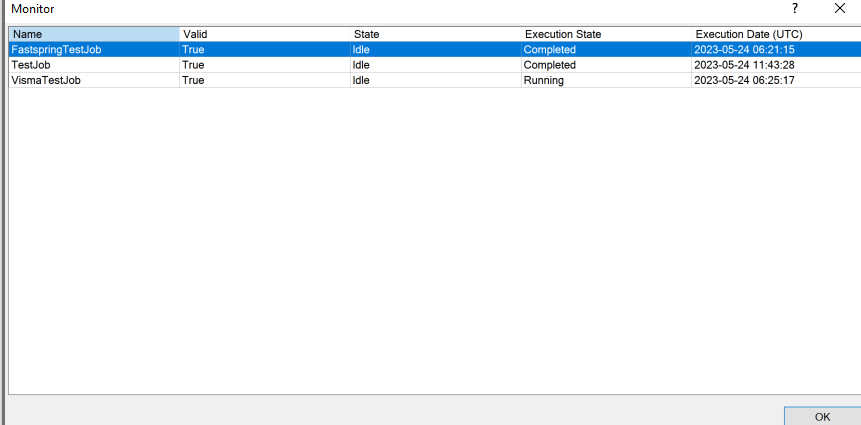

If I go into the execution log, I see an error message that essentially just says that the job didn’t succeed.

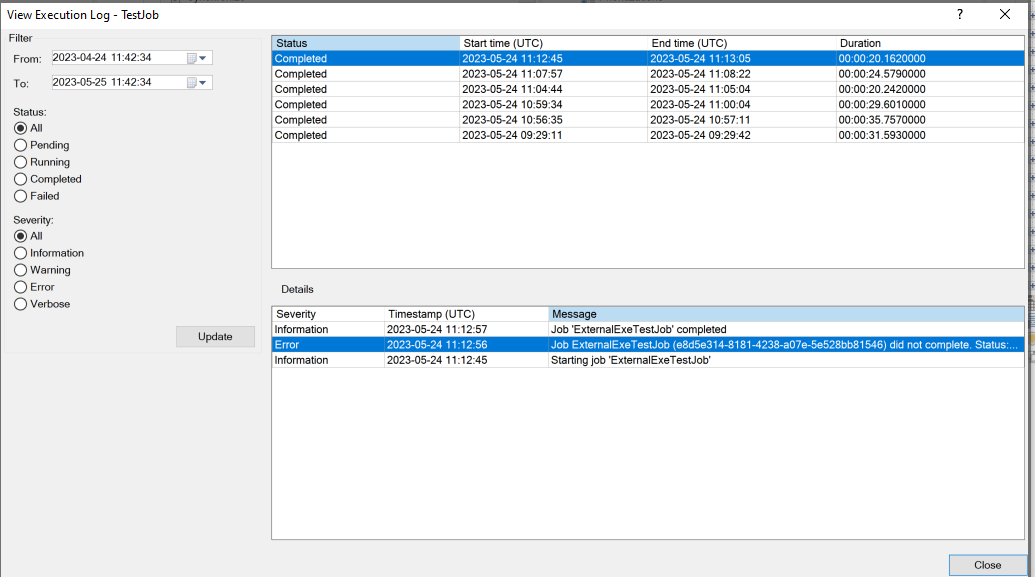

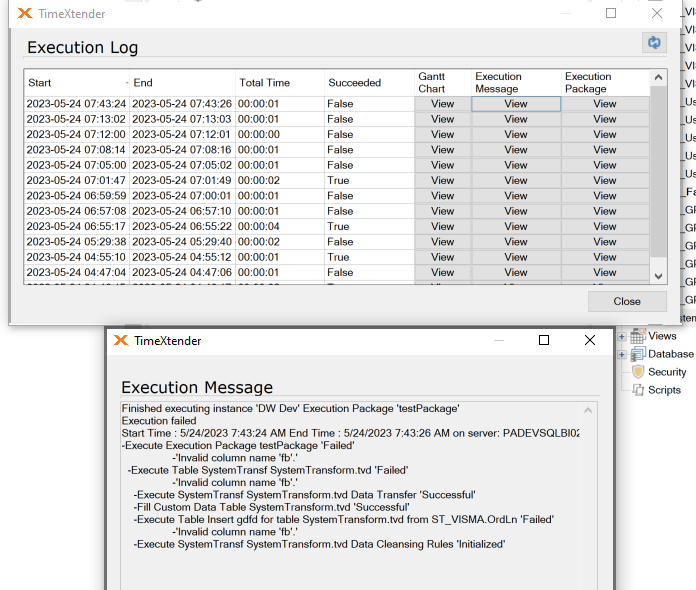

For debugging, that means I have to check the contents of the job (which could contain multiple execution packages), then head over to the Execution tab and check the log for each package, which is where I finally see all the details.
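Put differently, the lookup I currently do by hand is roughly the following. This is only a hypothetical sketch in Python: `Job`, `PackageLogEntry` and `failed_package_messages` are my own stand-ins for whatever the job definitions and execution package logs contain, not anything TimeXtender actually exposes.

```python
from dataclasses import dataclass
from datetime import datetime


# Hypothetical record shapes; these are stand-ins, NOT the TimeXtender API.
@dataclass
class Job:
    name: str
    package_names: list[str]  # execution packages scheduled by this job


@dataclass
class PackageLogEntry:
    package_name: str
    finished_at: datetime
    succeeded: bool
    message: str


def failed_package_messages(job: Job, package_log: list[PackageLogEntry]) -> dict[str, str]:
    """For each execution package in the job, find its most recent run
    and return that run's message if it failed."""
    latest: dict[str, PackageLogEntry] = {}
    for entry in package_log:
        if entry.package_name not in job.package_names:
            continue  # package belongs to some other job
        current = latest.get(entry.package_name)
        if current is None or entry.finished_at > current.finished_at:
            latest[entry.package_name] = entry
    # Keep only packages whose latest run failed
    return {name: e.message for name, e in latest.items() if not e.succeeded}


# Illustrative data only
job = Job("Nightly DW", ["Load staging", "Load DW"])
log = [
    PackageLogEntry("Load staging", datetime(2024, 1, 1, 2, 0), True, "OK"),
    PackageLogEntry("Load DW", datetime(2024, 1, 1, 1, 0), True, "OK"),
    PackageLogEntry("Load DW", datetime(2024, 1, 1, 3, 0), False, "Intentional failure for testing"),
]
print(failed_package_messages(job, log))
```

This is exactly the join between a job and its packages’ logs that I would like the Jobs log (or the API) to do for me.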

I think it would be nice to be able to reach the execution message(s) directly from the Jobs log. By extension, it would be very useful if this information were available through the TimeXtender API, as that would allow us to build a monitoring setup for the DW.

So, am I using jobs wrong, or would it be a good idea to increase the level of detail in the job logs? As things stand, I would much rather have the API track the execution package logs, since they contain the most relevant information about the flows in the DW.

Best,

Joel