Hello,

We are seeing an issue with an Enhanced data source, TimeXtender REST (version 1.9.0), in TimeXtender, where decimal values are interpreted incorrectly during ingestion.

We have two datasets based on the same AFAS source:

- one loaded directly through the TimeXtender REST connector
- one loaded through a PowerShell script that retrieves the same REST data and exports it to CSV, which is then loaded into TimeXtender

The CSV route shows the correct values for the field Aantal_FTE, for example:

- 0.18
- 0.25
- 0.55
- 0.95
- 1.00

However, the same field loaded directly through the TimeXtender REST connector shows incorrect values such as:

- 18
- 25
- 55
- 95
- 375
- 625
- 675

It appears that decimal values are already being misinterpreted in REST.rows, before the data reaches the staging table.

For example:

- 0.95 becomes 95
- 0.375 becomes 375
- 0.675 becomes 675

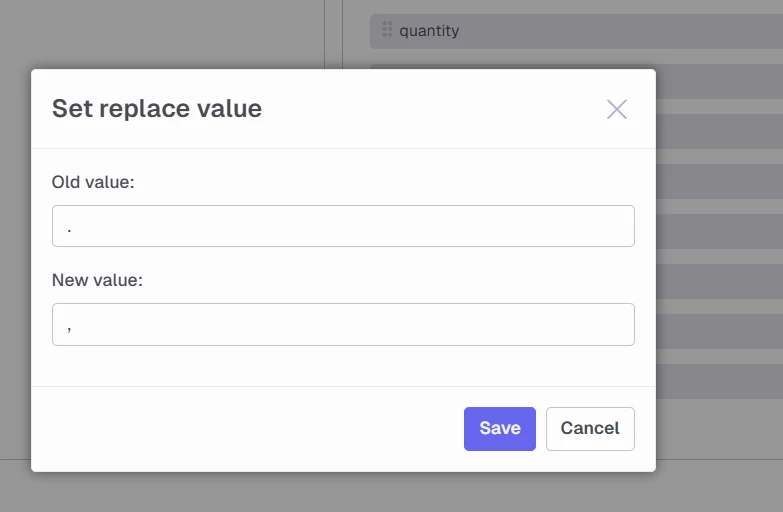

This suggests that the decimal separator is being lost during REST parsing.
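One possible explanation, offered only as a hypothesis: if the numeric text is parsed with a Dutch (nl-NL) culture, where `.` is the thousands separator and `,` is the decimal separator, then `0.95` is read as `095`, i.e. 95. The minimal Python sketch below imitates that culture-sensitive parsing next to culture-invariant parsing. The function names `parse_nl_style` and `parse_invariant` are hypothetical and do not reflect TimeXtender's actual implementation.

```python
from decimal import Decimal


def parse_nl_style(text: str) -> Decimal:
    """Hypothetical parser imitating nl-NL number rules (an assumption):
    '.' is treated as a thousands separator, ',' as the decimal separator."""
    # Drop the group separator, then turn the Dutch decimal comma into a point.
    normalized = text.replace(".", "").replace(",", ".")
    return Decimal(normalized)


def parse_invariant(text: str) -> Decimal:
    """Culture-invariant parsing: '.' is always the decimal separator."""
    return Decimal(text)


samples = ["0.18", "0.25", "0.55", "0.95", "0.375", "0.675", "1.00"]
for raw in samples:
    print(f"{raw:>6} -> nl-style: {parse_nl_style(raw)}   invariant: {parse_invariant(raw)}")
```

Under this hypothesis every REST value equals the correct value with the point removed, so a record whose correct value is 1.00 would show up as 100 in REST.rows; checking such a record could help confirm or rule out a locale mismatch.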

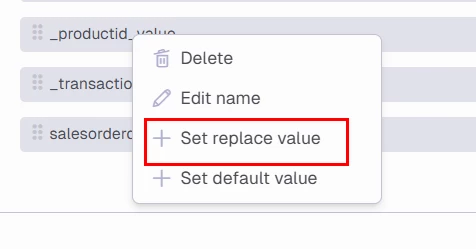

Additional observations:

- In the REST source preview, the incorrect values are already visible in REST.rows.
- The field is initially detected as Decimal.
- We also tested a datatype override from Decimal to Varchar, but this had no effect.
- This confirms the issue occurs before the SQL staging table is created.

The PowerShell script uses Invoke-RestMethod against the same AFAS endpoint and exports the returned rows to CSV. That output is correct, which suggests the issue is specific to the TimeXtender REST parsing layer and not the AFAS source itself.
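For comparison, standard JSON parsers read numbers culture-invariantly, because the JSON grammar only allows `.` as the decimal separator. The minimal Python sketch below, roughly analogous to the PowerShell route, parses a response and writes it to CSV. It assumes that `Aantal_FTE` is returned as a JSON number inside a `rows` array; the payload is illustrative, not real AFAS output.

```python
import csv
import io
import json
from decimal import Decimal

# Illustrative payload only; the real AFAS response shape is an assumption here.
payload = '{"rows": [{"Aantal_FTE": 0.95}, {"Aantal_FTE": 0.375}, {"Aantal_FTE": 1.00}]}'

# parse_float=Decimal keeps the exact decimal text instead of a binary float.
data = json.loads(payload, parse_float=Decimal)
values = [row["Aantal_FTE"] for row in data["rows"]]

# Write the parsed rows to CSV, similar in spirit to the PowerShell export.
buffer = io.StringIO()
writer = csv.writer(buffer)
writer.writerow(["Aantal_FTE"])
for value in values:
    writer.writerow([value])

print(values)
print(buffer.getvalue())
```

Because no culture setting is involved at any step, the fractional values survive intact, which matches the correct output seen through the CSV route.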

Could you please investigate whether this is a known issue with decimal parsing / locale handling in the REST connector?

If needed, I can provide screenshots showing:

- correct output through the CSV route
- incorrect output in REST.rows
- the datatype mapping in TimeXtender

Thank you.

Best regards,

Patrick Ruijter