Hi,

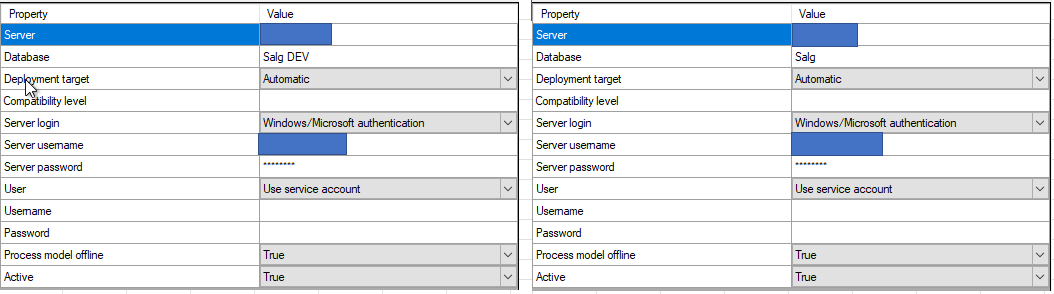

I have created a semantic model in our development environment that uses a global database.

In development the model is named Sales DEV and in production it is named Sales.

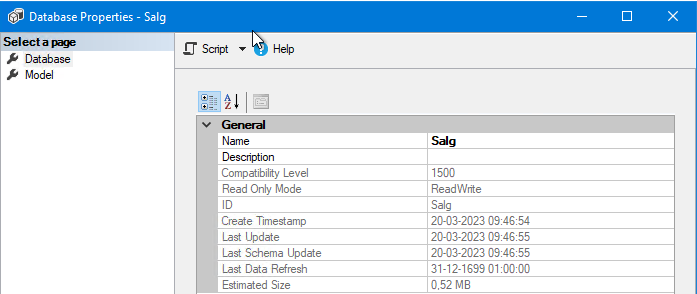

When I make changes to the model and run a multiple environment transfer, the data in the production model is deleted, and users cannot use the model until I have executed it in the production environment.

I expected the transfer to create an offline model that could be executed at a later time.

Instead, our users cannot access data for a period of time, which is unacceptable for our business.

How can I avoid this so that our users do not experience downtime on the production model?

Kind regards,

Rasmus Høholt