Prerequisites

- ODX Server machine has access to the URLs: *.googleapis.com/*

- Profile: 9-digit number connected to the GA profile you want to collect data from

- Property/Properties: 9-digit number relating to the property (e.g. website) you will collect data from. You will need a separate data source for each property (I think).

- Account with admin privileges in the API for granting access as well as authenticating OAUTH

Migrating Universal Analytics to V4

If you already have a connection to GA Universal Analytics with the CDATA for Google Analyics 2022 Provider, setting up the connection to V4 will be easy. I recommend creating a new CDATA data-source for V4, so that you can compare the tables from Universal Analytics and V4. The structure of the data model has changed significantly!

- Copy connection string from GA Universal CDATA data-source

- Create a new data source with the GA CDATA connector and version

- Import connection string

- Change the version to V4 at ‘Authentication’ → ‘Schema’ as well as ‘Schema’ → ‘API Version’

- Add property ID in the miscellaneous section

OAUTH will work seamlessly from one version to another, so you can save the OAuth settings file to the same location as the old connector. As far as the connection settings are concerned, you are now finished in TimeXtender!

GA V4 without migrating

You can follow the above guide and implement steps 4 & 5 from the migration guide in the connection settings before authenticating the connection in the Thomas’ guide. Concisely:

- Follow the CDATA guide for setting up connection (client secret etc.) CData ADO.NET Provider for Google Analytics - Establishing a Connection

- Add a new CDATA Google Analytics connection and set the profile, client secret and ID. Choose a suitable location for the OAuth settings file!

- Follow steps 4 & 5 in the migration guide above

- Authenticate OAuth using an account with the rights to do so

Enabling API access

Most of the tables in the API will require the API to be turned on in the project. You will get the errors:

[500] Could not execute the specified command: Google Analytics Admin API has not been used in project 0000000000 before or it is disabled. Enable it by visiting https://console.developers.google.com/apis/api/analyticsadmin.googleapis.com/overview?project=0000000000

Once you fixed that error, you will get the error:

[500] Could not execute the specified command: Google Analytics Data API has not been used in project 0000000000 before or it is disabled. Enable it by visiting

https://console.developers.google.com/apis/api/analyticsdata.googleapis.com/overview?project=00000000000

Either click on the links as they come and click ‘Enable’ or preventatively visit these links, substituting your own project ID.

Synchronizing source

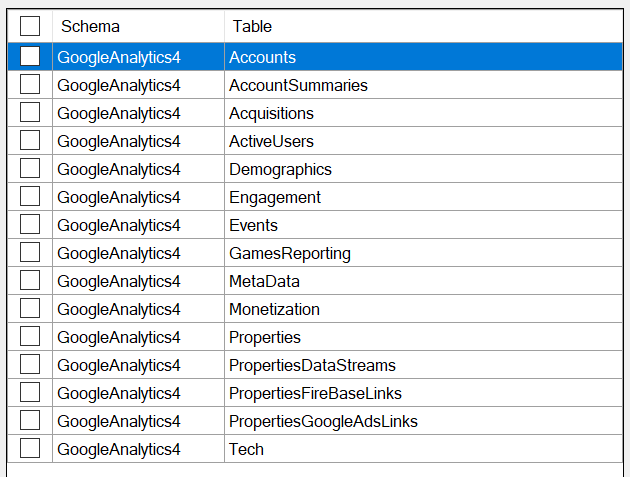

Now it's time to see which tables CDATA synthesizes for us from the entangled webs of the GA API. There is an extensive list of ‘views’ on the CDATA documentation website: CData ADO.NET Provider for Google Analytics - Views

However, I have only been able to find the following views:

update July 2024: there have been many more tables added to the CDATA provider since publishing of this article. The ‘global_access_object’ has also been reintroduced for v4.

Similarities and Differences to Universal

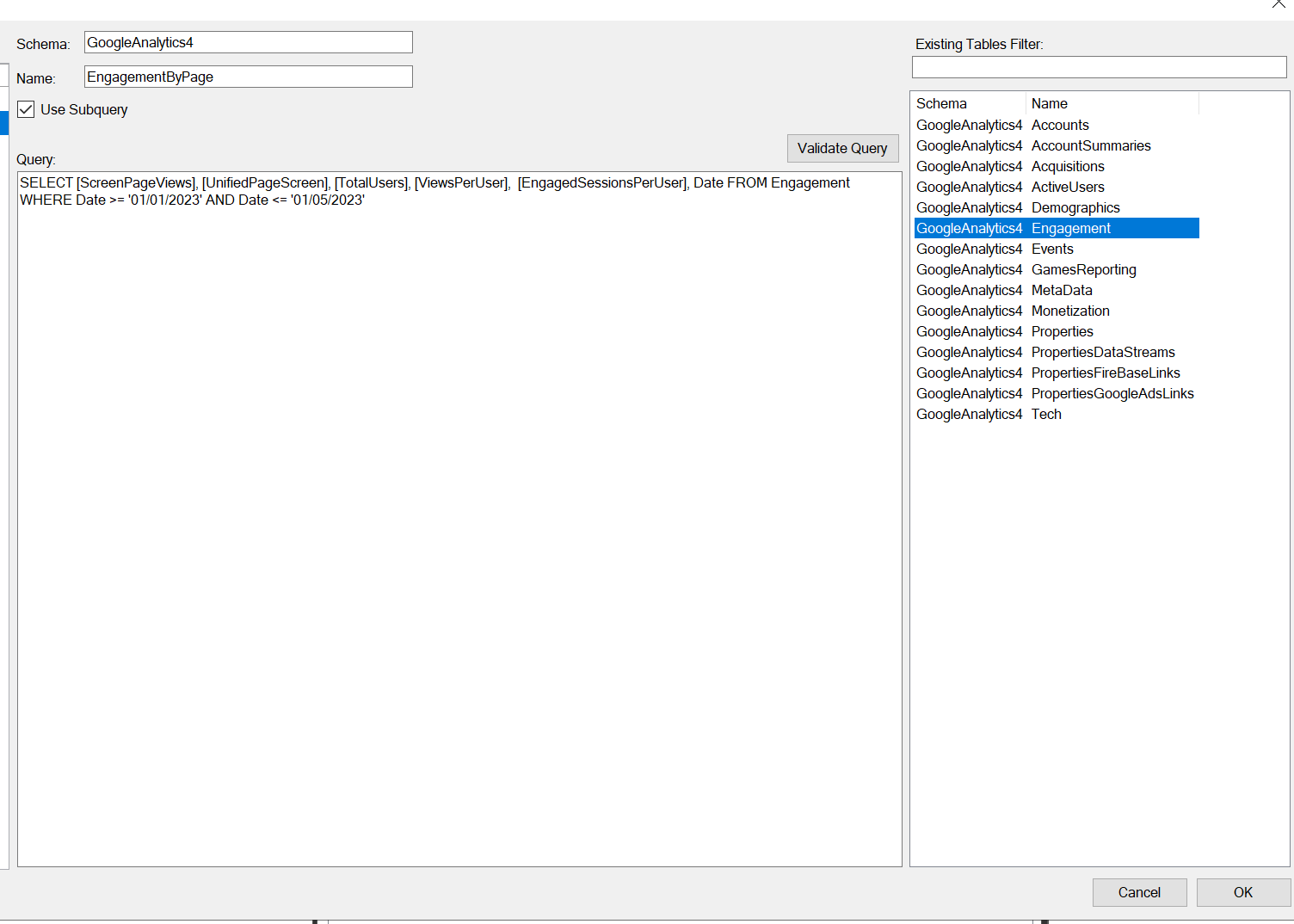

Similarly to Universal Analytics, we need to create Query Tables to extract meaningful data from the above tables. For example, if we just load the table ‘engagement’ from the provider, we will get a table with 25 columns and a single record. All dimensions are null. However, the following query table fetched me > 5.000 records by date:

SELECT

[ScreenPageViews]

, [UnifiedPageScreen]

, [TotalUsers]

, [ViewsPerUser]

, [EngagedSessionsPerUser]

, [Date]

FROM [Engagement]

WHERE 1=1

AND [Date] >= '01/01/2023'

AND [Date] <= '01/05/2023'update July 2024:

You can now use the global_access_object to select any combination of dimension or metric. Use https://ga-dev-tools.google/ga4/dimensions-metrics-explorer/ to explore the possible metrics and dimensions in GA4.

Some dimensions and metrics that could be combined in UA can no longer do so in G4. Under the hood there are also significant differences in attribution to key events like transactions. You can specify dimensions such as ‘sessionMedium’ or ‘FirstUserMedium’ to choose the attribution you want.

Query Tables

For those of us who have not worked with Query Tables in TimeXtender before, here is a quick guide:

- Right click on the data source you want to add the table to

- Click ‘Manage Query Tables’

- Type a schema name. For example, you could use the same schema as the rest of the tables in the data source

- Set a table name

- Drag the table you want to use as the base for your table from the pane on the right to the query editor. That way you can see which fields exist

- Now you can modify the query and click ‘OK’

- Make sure the table is selected, run the synchronize task, and make sure the table in selected in the transfer task

- Run the transfer task

- Marvel at your handy work!

If the devs are listening, it would be GREAT to improve this experience. I think we can all agree that formatting of queries should be persistent after saving, and the column names should be available in the pane on the right for building the query. This experience can be tedious, and it is easy to make mistakes in the query, so remember that there is a validate query button for testing.

Available CDATA filters

Certain filters can be included in the WHERE statement in your query table and will be interpreted by CDATA and sent to the API in the appropriate format. The list below is probably not exhaustive. If you find some other filters that work, let me know! Also, some filters may do nothing depending on the selected fields.

All tables accept ‘PropertyID’ in the where clause, but IMHO it's better to define it in the data source and have a data source for each property. All filters only accept ‘=’ (e.g. [Field] = ‘String’) except for date, which accepts ‘=’, ‘>=’, ‘<=’, ‘<’, ‘>’. Note that date filters defined here happen client-side.

If you want to adjust the date range your are grabbing from GA4, use the ‘Default Filters’ option in the Miscellaneous section of the CDATA settings. Format is as follows: StartDate='2023-01-01';EndDate='Today'. The dates can be formatted as a date, an integer n (representing today - n days) or as ‘Today’.

Not that the following list is no longer up to date!

You can find more information in de CDATA documentation for the GA connector:

CData ADO.NET Provider for Google Analytics - Changelog

Engagement

The ‘Engagement’ CDATA table accepts the following filters:

- Date

- ContentGroup:

- EventName:

- UnifiedScreenClass

- UnifiedScreenName

- PagePath

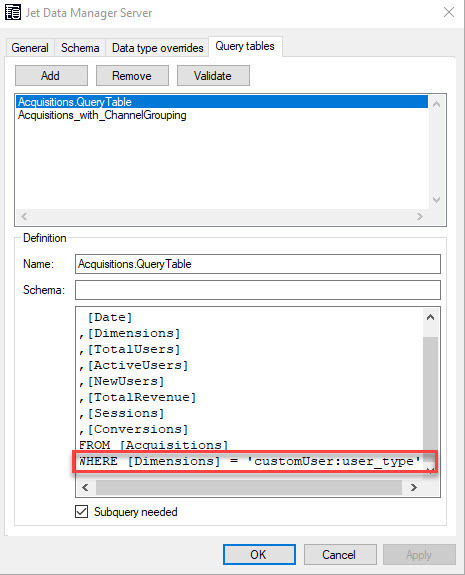

Acquisitions

The ‘Acquisitions’ CDATA table accepts the following filters:

- Date

- FirstUserGoogleAdsAdGroupName

- FirstUserGoogleAdsNetworkType:

- FirstUserMedium:

- FirstUserSourcePlatform:

- FirstUserSource:

- SessionDefaultChannelGrouping

- SessionMedium

Demographics

The ‘Demographics’ CDATA table accepts the following filters:

- UserAgeBracket

- Country

- UserGender

- BrandingInterest

- Language

- Region

Monetization

The monetization CDATA table accepts the following filters:

- AdFormat

- AdSourceName

- AdUnitName

- PagePath

Please share any additional knowledge, tips, or tricks you find below so that I can incorporate them into this guide!

--

Andrew - BI Consultant @ E-mergo