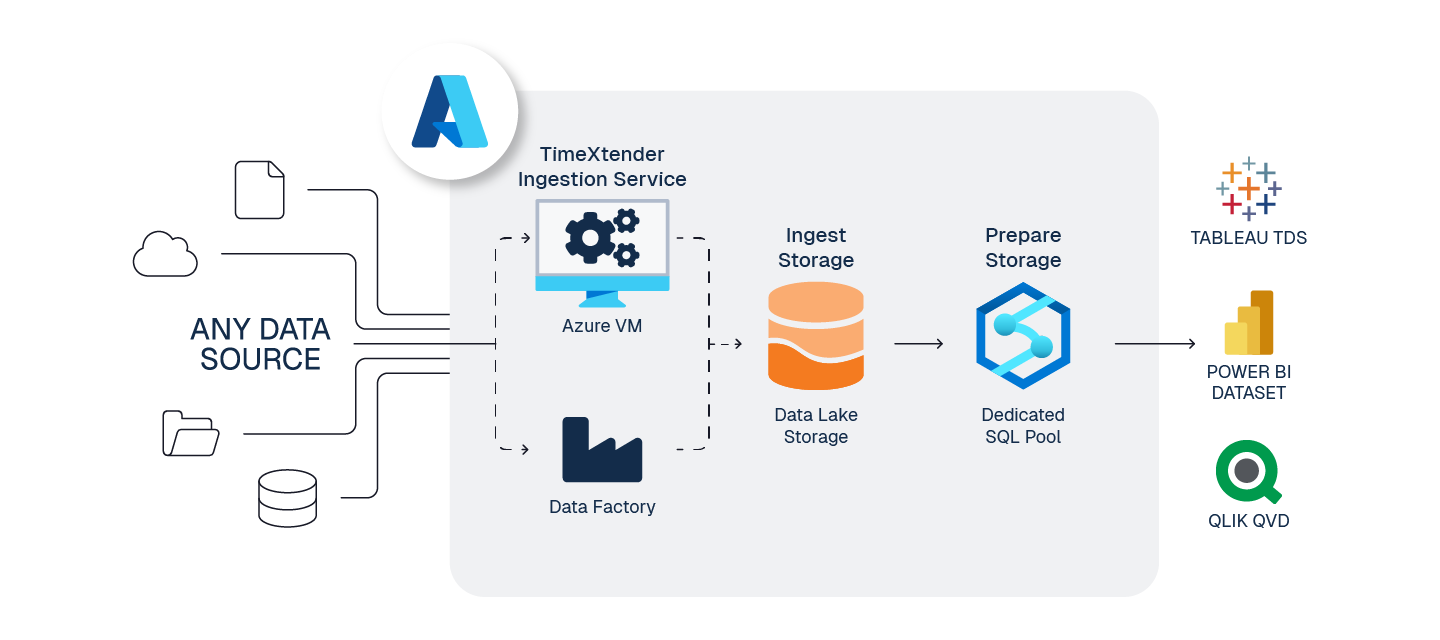

This reference architecture implements TimeXtender Data Integration with Azure Synapse Dedicated SQL Pool as Prepare storage, for maximum performance when data volumes become very large (for example, at least 1 TB of data, or tables with more than 1 billion rows). Dedicated SQL Pool uses a Massively Parallel Processing (MPP) architecture, which distributes processing across multiple compute nodes and allows analytics queries to run very fast. For more information, see "When should I consider Azure Synapse Analytics?" in the article What is Azure Synapse Analytics?.
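The rule of thumb above can be written as a small check. This is only a sketch: the function name and parameters are hypothetical, and the thresholds are the approximate figures quoted in this article, not hard limits.

```python
def is_dedicated_sql_pool_candidate(total_size_tb: float, largest_table_rows: int) -> bool:
    """Return True when the workload meets the article's rough sizing guidance.

    Assumption: either condition alone is enough to consider Dedicated SQL Pool
    (at least 1 TB of data, or a table with more than 1 billion rows).
    """
    return total_size_tb >= 1.0 or largest_table_rows > 1_000_000_000


# A 200 GB warehouse with small tables does not meet the guidance,
# while a 2.5 TB warehouse does.
print(is_dedicated_sql_pool_candidate(0.2, 5_000_000))
print(is_dedicated_sql_pool_candidate(2.5, 400_000_000))
```

Treat the result as a prompt to evaluate Synapse, not as a decision by itself; workload patterns and budget matter as well.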

Note: a similarly named Azure Synapse service, Serverless SQL Pool, cannot store data; it is only used for high-performance, low-cost queries on the Azure Data Lake Storage (Gen2) resource associated with the Synapse resource. For more information, see Query Ingest Parquet files with Azure Synapse Workspace.

To prepare your TimeXtender Data Integration environment in Azure, here are the steps we recommend:

1. Create Application Server - Azure VM

To serve the TimeXtender Data Integration application in Azure, we recommend using an Azure Virtual Machine (VM), sized according to your solution's requirements.

Guide: Create Application Server - Azure VM

Considerations:

- Recommended Sizing: DS2_v2 (for moderate workloads). See Azure VM Sizes documentation for more detail.

- If the Azure VM (App Server) hosts the Ingest Service, it must remain running for TimeXtender Data Integration to run.

- This VM will host the following services needed to run TimeXtender Data Integration:

- TimeXtender Ingest Service

- TimeXtender Execution Service

2. Create Storage for Ingest instance - Azure Data Lake Storage Gen2

ADLS Gen2 is a highly performant, economical, scalable, and secure way to store your raw data.

Guide: Create Storage for Ingest instance - Azure Data Lake Storage Gen2

Considerations:

- When creating the ADLS Gen2 data lake service, you must enable Hierarchical Namespaces.

- TimeXtender Data Integration writes files to the data lake in Parquet, a highly compressed, columnar file format.

- It is possible for Ingest instances to store data in Azure SQL DB (rather than in a data lake), but this adds cost and complexity without any additional functionality.

- When using Azure Data Lake for the Ingest instance and SQL DB for the Prepare instance, it is highly recommended to use Data Factory to transfer this data.

- ADLS will require a service principal, called App Registration in Azure, for TimeXtender Data Integration to access your data lake.

- Data Lake and ADF may share the same App Registration if desired.

3. Prepare for Ingest and Transport - Azure Data Factory (optional)

For large data movement tasks, ADF provides high performance and ease of use for both ingestion and transport.

Guide: Prepare for Ingest and Transport - Azure Data Factory (recommended)

Considerations:

- When creating ADF resources, use V2, which is the current default.

- A single ADF service can be used for both transport and ingestion

- Ingestion from data source to Ingest instance storage

- Transport from an Ingest instance to a Prepare instance

- The option to use ADF is not available for all data source types, but many options are available.

- ADF data sources do not support Ingest Query Tables at this time.

- ADF delivers fault-tolerant, high-performance data movement, but that performance can be quite costly.

- ADF will require a service principal, called App Registration in Azure, for TimeXtender Data Integration to access your ADF service.

- Data Lake and ADF may share the same App Registration if desired.

4. Create Storage for Prepare instance - Azure Synapse SQL Pool (SQL DW)

As your organization's data grows and performance becomes a key consideration, Azure Synapse SQL Pool, a massively parallel processing database, can be a great option for your data warehouse storage at scale.

Guide: Use Azure Synapse SQL Pool (SQL DW)

Considerations:

- Recommended Synapse Use Case Criteria:

- More than 1 TB of data

- Tables with at least 1 billion rows

- Data warehouse tuning is needed to achieve maximum performance.

- Table data is split across 60 distributions, in a process called sharding, and those distributions are spread over the pool's compute nodes. There are three distribution types (round-robin, replicated, and hash), each with ideal use cases.

- TimeXtender Data Integration automatically switches to PolyBase for transport when using Synapse for Prepare instance storage.

Note: the user interface will not reflect that this change has been made.
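To make the sharding consideration above more concrete, the following sketch simulates how rows could be assigned to the 60 distributions under hash and round-robin distribution. It is an illustration only: the function names are hypothetical, and SHA-256 stands in for Synapse's internal hash function, which is not described here. Replicated tables are not modeled, because each compute node keeps a full copy of the table instead of a shard.

```python
import hashlib
from collections import Counter
from itertools import cycle

NUM_DISTRIBUTIONS = 60  # number of distributions a sharded table is split into


def hash_distribution(key: str) -> int:
    """Map a distribution-column value to a distribution index.

    Assumption: SHA-256 is used purely for illustration. The point is that
    equal key values always land in the same distribution.
    """
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % NUM_DISTRIBUTIONS


def round_robin_distribution(row_count: int) -> list[int]:
    """Assign rows to distributions in turn, ignoring row content."""
    slots = cycle(range(NUM_DISTRIBUTIONS))
    return [next(slots) for _ in range(row_count)]


# Compare how evenly 6,000 hypothetical rows spread under each strategy.
customer_ids = [f"customer-{i}" for i in range(6000)]
hashed = Counter(hash_distribution(c) for c in customer_ids)
rotated = Counter(round_robin_distribution(len(customer_ids)))

print("hash: min/max rows per distribution:", min(hashed.values()), max(hashed.values()))
print("round-robin: min/max rows per distribution:", min(rotated.values()), max(rotated.values()))
```

Round-robin always spreads rows evenly but gives no guarantee about where related rows end up. Hash distribution keeps rows with the same key together, which helps joins and aggregations on that key, but a key with few distinct or heavily repeated values can leave some distributions much larger than others.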

5. Configure Power BI Premium Endpoint (Optional)

If you have Power BI Premium, deploy and execute Semantic Models within Deliver instances directly to the Power BI Premium endpoint.

Guide: Configure PowerBI Premium Endpoint (Optional)

6. Estimate Azure Costs

Balancing cost and performance requires monitoring and forecasting of your services and needs.

Guide: Estimate Azure Costs

Considerations:

- Azure provides a pricing calculator to help you estimate your costs for various configurations.

Note: the Azure pricing calculator does not include the cost of TimeXtender instances and usage.
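As a rough aid for forecasting, the components of this architecture can be combined into one monthly figure. The sketch below is an assumption-laden illustration: the class, its field names, and every rate in the usage example are hypothetical placeholders, not real Azure or TimeXtender prices. Take actual rates from the Azure pricing calculator and from your TimeXtender agreement.

```python
from dataclasses import dataclass

HOURS_PER_MONTH = 730  # common approximation for an average month


@dataclass
class MonthlyEstimate:
    vm_hourly_rate: float        # application server VM, per hour
    vm_hours: float              # hours the VM runs per month
    synapse_hourly_rate: float   # Dedicated SQL Pool compute, per hour
    synapse_hours: float         # compute hours billed per month
    storage_monthly: float       # data lake and warehouse storage
    adf_monthly: float = 0.0     # ADF is optional in this architecture
    timextender_monthly: float = 0.0  # not covered by the Azure calculator

    def azure_total(self) -> float:
        """Sum of the Azure services only."""
        return (
            self.vm_hourly_rate * self.vm_hours
            + self.synapse_hourly_rate * self.synapse_hours
            + self.storage_monthly
            + self.adf_monthly
        )

    def grand_total(self) -> float:
        """Azure services plus TimeXtender instances and usage."""
        return self.azure_total() + self.timextender_monthly


# Placeholder numbers only; the VM runs all month because it hosts the services.
estimate = MonthlyEstimate(
    vm_hourly_rate=0.25,
    vm_hours=HOURS_PER_MONTH,
    synapse_hourly_rate=1.5,
    synapse_hours=200,
    storage_monthly=50.0,
    adf_monthly=25.0,
    timextender_monthly=100.0,
)
print(f"Azure services: {estimate.azure_total():.2f}")
print(f"Including TimeXtender: {estimate.grand_total():.2f}")
```

Keeping the TimeXtender cost as a separate field mirrors the note above: the Azure figure and the overall figure are different numbers and should be tracked separately.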